Ensuring CloudFormation package only updates Lambdas that have been modified

How do I ensure Lambda build consistency?

How do I prevent my Lambda from updating at each deployment?

TL;DR

Fix your timestamps! Choose some date (2010-01-01, for example) and make sure your code packaging always happens after you have set every atime and mtime to that fixed date.

# Change the atime and mtime of all non-hidden files and folders in the current path

find . -not -path '*/.*' -exec touch -a -m -t"201001010000.00" {} \;

Introduction

If you deploy Serverless architectures on AWS, you probably use AWS Lambda heavily. Because you are, of course, a DevSecOps apologist, you probably use CI/CD tools to automatically deploy your changes whenever you commit them to your Git repository, relying on either Terraform or CloudFormation.

Ideally, when you change a specific part of your serverless application, you would like your IaC tool to redeploy only the Lambda functions that actually changed. But you have probably noticed that each time you make a release and repackage your Lambda functions, Terraform or CloudFormation wants to redeploy each and every one of them, whether they changed or not.

If you recognize a familiar and annoying situation in this little introduction, you are in the right place. My friend, this problem will soon be in your past.

First I will explain why the problem arises, using what I’m most familiar with as an example: CloudFormation in a CodeBuild/CodePipeline environment (you should be able to relate even if you use other tools). Then I will give you the incredibly simple solution I came up with.

Why is it happening?

The basics of how it works

When you use CloudFormation to deploy Lambda, you can take advantage of the aws cloudformation package CLI command. The principle is simple: you declare your Lambda resource and reference a local path to the code instead of an S3 URL:

ExampleLambda:
  Type: AWS::Lambda::Function
  Properties:
    FunctionName: example-lambda
    Handler: index.lambda_handler
    Runtime: python3.9
    Description: Example lambda
    Code: lambdas/example-lambda-code-folder
    MemorySize: 128
    Timeout: 10

The path you write is relative to the path of the template file.

Then, in your build script, you can use the CLI:

aws cloudformation package --template-file template.yaml --s3-bucket artifact-bucket --output-template-file modified-template.yaml

This command will “magically”:

- Seek local paths for Lambda code in the template.yaml file

- Make a zip archive of the designated folder

- Upload the zip archive to the S3 bucket artifact-bucket if it has changed

- Replace the local path with the appropriate S3 URI in a new template file (modified-template.yaml in our example command)

It actually works beyond Lambda resources (but unfortunately not for everything); see https://docs.aws.amazon.com/cli/latest/reference/cloudformation/package.html for an exhaustive list of supported resources.

To detect whether the Lambda code actually changed and needs to be updated, the CLI does the following:

- It creates the zip package of the supplied local path, no matter what

- It computes the S3 object key based on the MD5 checksum of the zip

- If the key already exists in S3, then it means that the archive has not changed and was already uploaded.

Furthermore, as long as the Zip archive is the same, its MD5 checksum and the computed S3 path do not change, so the Lambda resource in the generated CloudFormation template is identical. Therefore CloudFormation has no reason to update your Lambda.
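The detection logic described above can be approximated in a few lines of shell. This is only a sketch of the idea, not the CLI's actual code: the function names artifact_key and upload_if_missing are made up for illustration, and it assumes GNU md5sum plus a configured aws CLI for the upload step.

```shell
#!/usr/bin/env bash
# Hypothetical helpers illustrating content-addressed uploads.

# Derive the S3 object key from the MD5 checksum of the archive.
artifact_key() {
  md5sum "$1" | cut -d' ' -f1
}

# Upload the archive only if no object with that key exists in the bucket yet.
upload_if_missing() {
  archive="$1"
  bucket="$2"
  key="$(artifact_key "$archive")"
  if aws s3api head-object --bucket "$bucket" --key "$key" >/dev/null 2>&1; then
    echo "unchanged: s3://$bucket/$key already exists"
  else
    aws s3 cp "$archive" "s3://$bucket/$key"
  fi
}
```

You would call it as upload_if_missing lambda.zip artifact-bucket. Because the key is derived from the bytes of the archive, anything that alters those bytes (including a stored timestamp) produces a new key and therefore a new upload.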

When things start to go south…

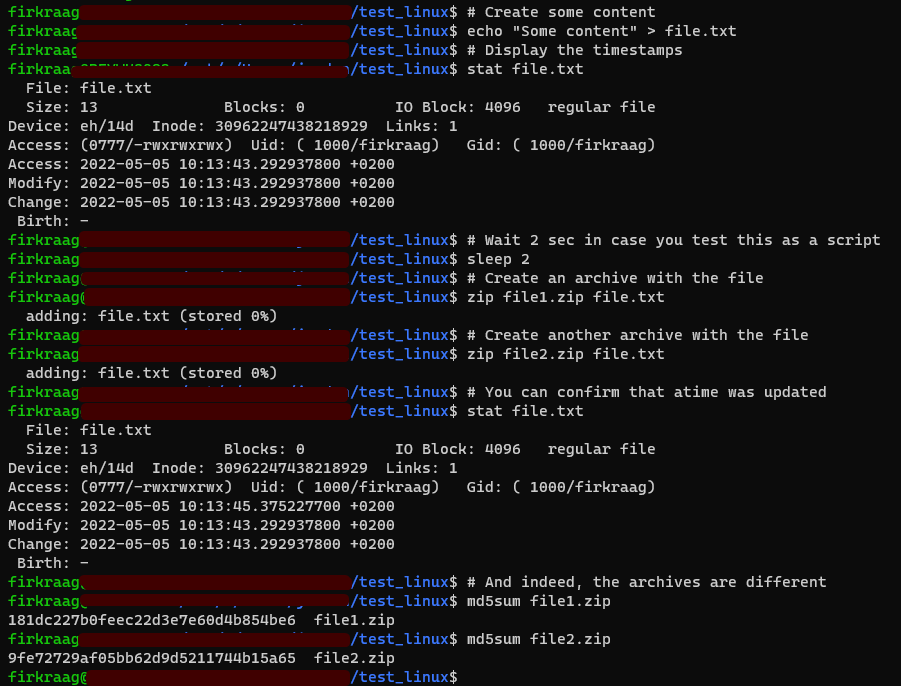

The key thing to understand is that a Zip archive includes the timestamps (atime and mtime) of the files. If your filesystem updates the atime (access time), it is very difficult to generate an identical archive, because creating the archive is, in itself, a file access. The simple act of zipping your files updates their atime, so if you recreate your zip archive without changing anything, it will be different. This is easily demonstrated with this simple script on a filesystem with atime activated:

# Create some content

echo "Some content" > file.txt

# Display the timestamps

stat file.txt

# Wait 2 sec to ensure atime will be different

sleep 2

# Create an archive with the file (it updates the atime)

zip file1.zip file.txt

# Create another archive with the file

zip file2.zip file.txt

# You can confirm that atime was indeed updated

stat file.txt

# And indeed, the archives are different

md5sum file?.zip

But most of the time, atime is not activated on the filesystem (for performance reasons and to preserve the lifespan of SSDs, but that is another story). So it is most likely that if you execute the previous script, you will see no update of atime with the stat command, and your archives will be identical (same MD5 checksum).

So when atime is not activated, everything is alright, right? The mtime of a file only changes if you modify its content (or touch it), so the Zip archive should only differ when something really changed, right?

Enter Git and the CI/CD pipeline.

Git does not care about file timestamps. It does not store them, and therefore they are lost when you clone a repository or check out a branch. And what is your CI/CD pipeline doing? Essentially, it clones your repository into a fresh environment (usually a container) in order to execute some build commands. Every atime and mtime is set to the time of the clone or checkout.

And here is our problem: each new build will be a new clone/checkout, so files will have different timestamps in each build, so Zip archives will always be different, no matter if the file contents actually changed or not!

And it all cascades: different zip archives, different MD5 checksums, different S3 paths for the artefacts, changes in the final CloudFormation templates and there you go. CloudFormation will update every single lambda, every single time.
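To see this for yourself, the sketch below commits one file, clones the repository twice a couple of seconds apart, and compares the mtime of the checked-out file. It assumes git and GNU stat are available; the directory names and the demo identity passed to git are arbitrary.

```shell
#!/usr/bin/env bash
set -eu
work="$(mktemp -d)"
cd "$work"

# Create a throwaway repository with a single committed file
git init -q source-repo
echo "Some content" > source-repo/file.txt
git -C source-repo add file.txt
git -C source-repo -c user.name=demo -c user.email=demo@example.com commit -q -m "Initial commit"

# Clone it twice, a couple of seconds apart, like two successive builds
git clone -q source-repo build1
sleep 2
git clone -q source-repo build2

# Same content, but the mtime (seconds since the epoch) is the checkout time
mtime1="$(stat -c %Y build1/file.txt)"
mtime2="$(stat -c %Y build2/file.txt)"
echo "build1: $mtime1"
echo "build2: $mtime2"
cmp build1/file.txt build2/file.txt && echo "contents are identical"
```

The two mtimes printed should differ even though cmp reports identical contents, which is exactly the situation two successive pipeline runs find themselves in.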

How do you prevent it? How to ensure Lambda build consistency?

Legends say that generations of DevOps engineers tried desperately to prevent Zip from storing the timestamps, but all those brave souls failed and eventually went mad. I was myself one of those brave souls.

But I recently had an epiphany, hence the blog post 🙂

Why try to prevent timestamps storage when we all know that we can manipulate the timestamps at will using touch?

The solution is in fact very simple: in our build scripts, we just have to make sure to fix the timestamps at some given date before constructing the packages!

Behold! The solution:

# Change the atime and mtime of all non-hidden files and folders in the current path

find . -not -path '*/.*' -exec touch -a -m -t"201001010000.00" {} \;

You just have to insert that in your build script, right before you create your Zip packages. In my case, I execute this line before calling the aws cloudformation package command. And that’s it! It works, plain and simple.
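If you want to check that the timestamps really are uniform before packaging, a small sanity check like the following can help. It is a sketch that assumes GNU find and stat; the sample directory tree is invented for the demonstration, and pinning TZ=UTC is my own precaution so that the fixed date is interpreted as the same instant on every build machine.

```shell
#!/usr/bin/env bash
set -eu
export TZ=UTC   # assumption: pin the timezone used by touch -t

# Invented sample tree standing in for your Lambda sources
src="$(mktemp -d)"
mkdir -p "$src/lambdas/example-lambda-code-folder"
echo "print('hello')" > "$src/lambdas/example-lambda-code-folder/index.py"
echo "requests" > "$src/lambdas/example-lambda-code-folder/requirements.txt"
cd "$src"

# Same command as above, with '+' so that touch is invoked once for many files
find . -not -path '*/.*' -exec touch -a -m -t 201001010000.00 {} +

# Count the distinct mtimes left in the tree: it should be exactly one
distinct="$(find . -not -path '*/.*' -exec stat -c %Y {} + | sort -u | wc -l)"
echo "distinct mtimes: $distinct"
```

In a real build script you would fail the build when the count is not 1, then go on to call aws cloudformation package.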

Note that I set the timestamps to 2010-01-01 00:00:00 here, but you can choose whatever you want, as long as it is fixed and not earlier than 1980: the Zip format stores dates in the MS-DOS format, which starts on 1980-01-01.

To conclude this blog post, here is the little script you can use to convince yourself that you are not dreaming, and that it actually works (no matter if atime is active on your filesystem):

# Create some content

echo "Some content" > file.txt

# Fix the timestamps

touch -t201001010000.00 file.txt && stat file.txt

# Create an archive with the file

zip file1.zip file.txt

# Update the mtime without modifying the file

touch -m file.txt && stat file.txt

# Create another archive

zip file2.zip file.txt

# Set the timestamps once again

touch -t201001010000.00 file.txt && stat file.txt

# Create a last archive with the file

zip file3.zip file.txt

# md5sum will confirm that the first and last archives are the same

# but the second one is different because its mtime changed

md5sum file?.zip

If you were already convinced, this script does not bring you anything new, but it demonstrates the impact of changing the mtime of the file on the checksum of the Zip archive.

Finally, you will notice that touch cannot change the ctime, but Zip does not care for ctime so it’s ok.

Even though this blog post relies on my experience with aws cloudformation package, I believe that the same kind of problem happens with Terraform for the exact same reasons, though you may be using a Terraform module that offsets the problem with additional code. For example, I have seen modules that compute the checksums of the individual files before zipping. It works, but I still believe that the solution I propose is cool, because it is so simple.
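For comparison, the checksum-based approach mentioned above can be sketched as follows. This is not taken from any particular Terraform module: content_hash is a hypothetical helper that hashes file paths and contents only, so timestamps never enter the result. It assumes GNU find, sort, xargs and sha256sum.

```shell
#!/usr/bin/env bash
# Hypothetical helper: hash a directory by the paths and contents of its files,
# ignoring all timestamps.
content_hash() {
  (
    cd "$1" || exit 1
    # Sort the file list so the result does not depend on directory order
    find . -type f -print0 | LC_ALL=C sort -z | xargs -0 sha256sum | sha256sum | cut -d' ' -f1
  )
}
```

Calling content_hash lambdas/example-lambda-code-folder before and after a touch gives the same value, while editing any file changes it. The trade-off is that you have to carry this extra logic around, whereas fixing the timestamps is a single line.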